What is scalability in cloud computing?

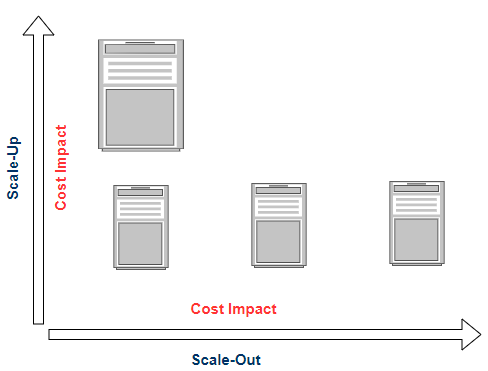

Scalability is one of the primary attributes to keep in mind while designing or developing your software product. It is a measure of the system’s ability to handle user requests efficiently as demand increases or decreases. Scaling helps maintain a good balance between performance and cost by scaling up (adding resources to handle peak workloads) and scaling down (removing resources when the workload is lighter).

For example, during the festive season many e-commerce companies offer large discounts to increase sales. To handle the resulting surge in user requests, the system needs more resources, such as servers and storage, on demand.

Scalability in cloud computing is the ability to increase or decrease IT resources on demand as business requirements change. First, determine which resources need to be scaled, such as servers, databases, or storage. Once the resources are identified, you can apply a scaling configuration or policy to scale your application. You can also choose automation (Auto Scaling) to optimize cloud scalability.

Types of Scaling in Cloud Computing

Vertical scaling

It is also known as scaling up or scaling down.

Scaling Up – Add more powerful CPUs and more memory (RAM) to the existing server to manage the increased workload.

Scaling Down – Remove resources from the existing server when the workload decreases.

If the workload keeps increasing, vertical scaling is not a good option, because there is an upper limit to the resources that can be added to a single server. Resizing a server also takes time, and the application may go down or become unavailable to end users while the resize is in progress.
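
The upper limit of vertical scaling can be illustrated with a minimal Python sketch. The size catalog and the function name `pick_server_size` are hypothetical and do not correspond to any cloud provider's API; the sketch only shows that once the workload exceeds the largest available size, there is nothing left to scale up to.

```python
from typing import Optional

# Hypothetical size catalog: (name, vCPUs, RAM in GiB), ordered smallest to largest.
SERVER_SIZES = [
    ("small", 2, 4),
    ("medium", 4, 16),
    ("large", 16, 64),
    ("xlarge", 64, 256),  # the largest size is the ceiling for vertical scaling
]


def pick_server_size(needed_vcpus: int, needed_ram_gib: int) -> Optional[str]:
    """Return the smallest size that fits the workload, or None if none does."""
    for name, vcpus, ram_gib in SERVER_SIZES:
        if vcpus >= needed_vcpus and ram_gib >= needed_ram_gib:
            return name
    # No single server is big enough: vertical scaling has run out of headroom.
    return None


print(pick_server_size(3, 8))      # a workload that fits a mid-sized server
print(pick_server_size(128, 512))  # a workload larger than any single server
```

When the function returns `None`, the workload has to be spread across several servers instead, which is where horizontal scaling comes in.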

Horizontal scaling

It is also known as Scaling out or Scaling in.

Scaling out – Add more resources (for example, additional servers) to the infrastructure so that replicas of the application can work in parallel.

Scaling in – Remove resources from the existing infrastructure.
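
As a rough illustration of scaling out and in, the sketch below estimates how many identical servers a given load needs and reports the resulting action. It assumes every server handles the same number of requests per second; the function names and the example numbers are hypothetical.

```python
import math


def servers_needed(requests_per_second: float,
                   capacity_per_server: float,
                   min_servers: int = 1) -> int:
    """Estimate the replica count, assuming each server handles the same load."""
    if capacity_per_server <= 0:
        raise ValueError("capacity_per_server must be positive")
    return max(min_servers, math.ceil(requests_per_second / capacity_per_server))


def scaling_action(current: int, desired: int) -> str:
    """Describe the change needed to move from current to desired servers."""
    if desired > current:
        return f"scale out by {desired - current}"
    if desired < current:
        return f"scale in by {current - desired}"
    return "no change"


# Peak traffic: 950 requests/s with servers that each handle 200 requests/s.
peak = servers_needed(950, 200)
print(peak, scaling_action(3, peak))

# Quiet period: 100 requests/s on the same servers.
quiet = servers_needed(100, 200)
print(quiet, scaling_action(peak, quiet))
```

Unlike vertical scaling, the count returned here has no hard ceiling from a single machine's size, although real deployments usually cap it for cost reasons.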

3 ways to scale in a cloud environment

Manual Scaling

Manual scaling requires an individual or team member to monitor the application and add or remove servers according to demand. This type of scaling is prone to human error.

Scheduled Scaling

With scheduled scaling, you can set up scaling for a predictable load without involving an individual or team member.

For example, if you know that traffic to your application increases on weekends (Saturday and Sunday) and requires 10 more servers, you can configure scheduled scaling (rules with specific dates and times) to scale out by 10 servers for the weekend and scale in automatically on Monday.
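
The weekend rule from this example can be sketched in a few lines of Python. The baseline of 4 servers is an assumption made for illustration, and `desired_servers` is a hypothetical helper rather than a cloud provider's API; real platforms express the same idea as scheduled actions or cron-style rules.

```python
from datetime import datetime

BASELINE_SERVERS = 4        # assumed weekday capacity, chosen for illustration
WEEKEND_EXTRA_SERVERS = 10  # the 10 extra servers from the example above


def desired_servers(now: datetime) -> int:
    """Return the scheduled server count for the given date and time."""
    # datetime.weekday() returns 0 for Monday through 6 for Sunday,
    # so 5 and 6 are Saturday and Sunday.
    if now.weekday() >= 5:
        return BASELINE_SERVERS + WEEKEND_EXTRA_SERVERS
    return BASELINE_SERVERS


print(desired_servers(datetime(2024, 1, 6, 12, 0)))  # a Saturday
print(desired_servers(datetime(2024, 1, 8, 9, 0)))   # the following Monday
```

A scheduler that evaluates this function periodically would scale out at the start of Saturday and scale back in on Monday with no one watching the application.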

Auto Scaling

With Auto Scaling, you can scale resources (servers, databases, storage, etc.) out or in automatically based on predefined thresholds for metrics such as memory usage, network usage, and CPU utilization. You can define Auto Scaling policies to keep applications highly available.

For example, if the workload on your existing infrastructure increases, you can configure Auto Scaling to launch a new server automatically when CPU utilization stays above 70% for 3 consecutive minutes, and to remove the server again when the workload drops. This kind of dynamic scaling helps optimize resource utilization and cost.
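
The threshold rule in this example can be sketched as a small simulation. It assumes one CPU reading per minute, so a window of 3 readings stands for 3 consecutive minutes. The 70% scale-out threshold comes from the example; the 30% scale-in threshold, the server limits, and the class name `CpuAutoScaler` are assumptions made for illustration, not part of any real Auto Scaling service.

```python
from collections import deque


class CpuAutoScaler:
    """Toy threshold-based scaler fed with one CPU reading per minute."""

    def __init__(self, scale_out_above: float = 70.0, scale_in_below: float = 30.0,
                 window: int = 3, min_servers: int = 1, max_servers: int = 10):
        self.scale_out_above = scale_out_above
        self.scale_in_below = scale_in_below  # assumed value, not from the text
        self.min_servers = min_servers
        self.max_servers = max_servers
        self.servers = min_servers
        self.samples = deque(maxlen=window)  # keeps only the latest readings

    def observe(self, cpu_percent: float) -> int:
        """Record a reading, scale if the rule fires, and return the server count."""
        self.samples.append(cpu_percent)
        if len(self.samples) == self.samples.maxlen:
            high = all(s > self.scale_out_above for s in self.samples)
            low = all(s < self.scale_in_below for s in self.samples)
            if high and self.servers < self.max_servers:
                self.servers += 1
                self.samples.clear()  # wait for a fresh window before acting again
            elif low and self.servers > self.min_servers:
                self.servers -= 1
                self.samples.clear()
        return self.servers


scaler = CpuAutoScaler()
readings = [65, 75, 80, 85, 40, 20, 25, 20]
history = [scaler.observe(r) for r in readings]
print(history)
```

Clearing the window after each action acts as a simple cooldown, so a single spike does not launch several servers in a row. Real Auto Scaling services offer configurable cooldown periods for the same reason.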